2026-04-07

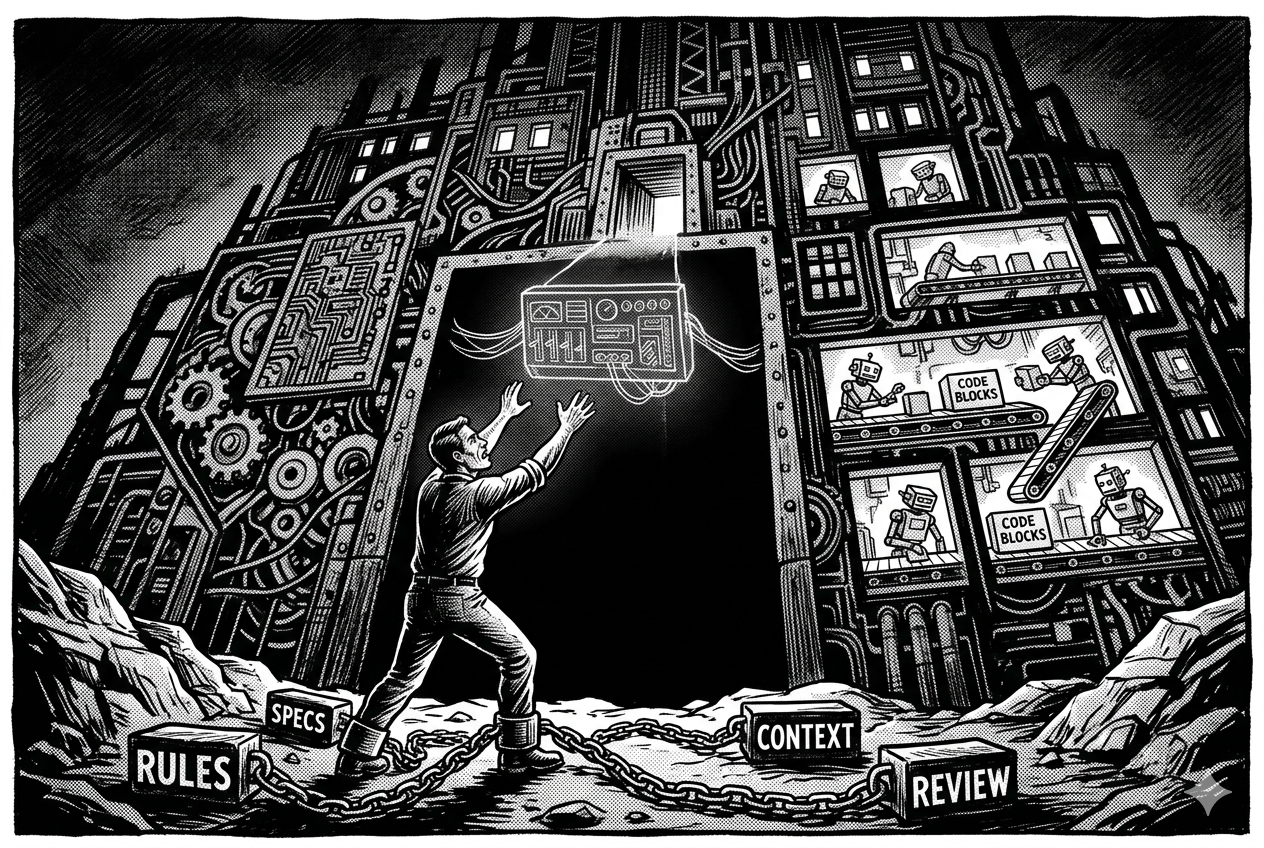

The Gravity Between You and the 100X Dark Factory

I heard Nathan B Jones talking about the concept of a living resume and how he thought the current hiring model was toast. The idea stuck with me. I expanded it into a product called Profyle: a professional profile platform with three layers of depth. A scannable surface view, expandable stories behind each role, and an AI conversation layer where visitors can ask questions about your background. LinkedIn discovers you. Profyle helps people understand you.

I decided to use it as an experiment. Could I build it with full AI autonomy?

Dan Shapiro drew an analogy to self-driving car levels and came up with five levels of AI-assisted development. At Level 4, the human writes specs and crafts skills. The AI implements. At Level 5, you have a “Dark Factory”: specs go in, shipped software comes out, no human in the execution loop. The term comes from lights-out manufacturing. StrongDM shared a public example of this: three engineers, no human-written or human-reviewed code, external test scenarios as enforcement.

I aimed for Level 5. I landed at Level 4, with Level 5 moments on the mechanical parts. This is what the attempt looked like and where the boundary actually sits.

Before the Factory

Before any AI touched any code, I had to figure out what to build:

- Product and market research (some automated with AI)

- Product definition and intention statement

- Architecture document

- Implementable specs, broken down into GitHub issues

Those added up to the MVP.

The factory doesn’t know what to build. I did.

The Factory

I didn’t just build Profyle. I built a factory model using Claude Code’s capabilities (rules, skills, subagents, and hooks) to see how close to autonomous I could get. At Level 4 and above, building and tuning that system IS the job.

Rules (24 files) auto-load by file context and cover code style, architecture, testing standards, and operational guides. Failures during the project drove revisions to them: some led by me, some by agents that recognized issues and amended the rules themselves.

Skills (8 Profyle-specific) are pipelines for repeatable workflows. /profyle-ship goes from board issue to merged PR in one command: branch, TDD, review, PR, merge.

TDD actor separation means the agent that writes tests is never the agent that writes code. The tester doesn’t know the implementation. The coder doesn’t write the tests.

Convergence loops are resolution-based quality control. The loop continues until every finding is resolved, not after a fixed number of iterations. A circuit-breaker prevents infinite loops.
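A minimal sketch of that loop shape, not the actual skill: the `Finding` struct, the `converge` method, and the `max_rounds` default are hypothetical names chosen for illustration. The loop exits only when the review returns no open findings, and the circuit-breaker raises if that never happens.

```ruby
# Hypothetical sketch of a resolution-based convergence loop.
# Finding, converge, and max_rounds are illustrative names, not part of Profyle.
Finding = Struct.new(:id, :description)

class CircuitBreakerTripped < StandardError; end

# review: callable returning an Array of still-open findings
# fix:    callable that receives the open findings and attempts to resolve them
def converge(review:, fix:, max_rounds: 5)
  rounds = 0
  loop do
    findings = review.call
    return rounds if findings.empty? # exit only when everything is resolved

    if rounds >= max_rounds
      raise CircuitBreakerTripped,
            "#{findings.size} finding(s) still open after #{rounds} rounds"
    end

    fix.call(findings)
    rounds += 1
  end
end

# Usage: a fake fixer that resolves one finding per round.
open_findings = [Finding.new(1, "missing nil check"), Finding.new(2, "N+1 query")]
review = -> { open_findings.dup }
fix = ->(findings) { open_findings.delete(findings.first) }

rounds = converge(review: review, fix: fix)
puts "converged after #{rounds} fix rounds"
```

The exit condition is the empty findings list, so a clean first review costs zero fix rounds, while a stuck finding surfaces as an error rather than a silent pass.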

These patterns came from prior projects. New problems came up as they were adapted for longer-running autonomous sessions.

The Numbers

| Metric | Value |
|---|---|
| Commits | 514 |
| Active development | ~8 days |
| Rules files | 24 |
| Skills (total / Profyle-specific) | 40 / 8 |
| Test files | 181 |
| Test code | ~24,000 lines |
| App code (Ruby) | ~5,400 lines |
| Test-to-code ratio | 4.4:1 |
| ViewComponents | 28 |
| RubyLLM Agents | 9 |
| Models | 19 |
| Controllers | 48 |

Test coverage is high, but some of the early tests were duplicative or too verbose. Those were written before the TDD rules were optimized.

What Worked

Work was organized into milestones (M0: Skeleton through M6: Go Live). Each issue was scoped to be individually implementable from the spec alone. The agent can’t ask clarifying questions, so ambiguity meant expensive guesses. Only issues marked “Ready” could enter the pipeline.

The feedback loop was a big part of making this work: bad output got diagnosed, distilled into a rule, and didn’t repeat. A couple of examples of what that looked like:

- The AI refused to use WebMock/VCR and stubbed API calls with invented payloads, so tests passed with zero connection to reality. Fix: forced VCR for real response recording.

- A RubyLLM schema DSL missing the `object` wrapper generated the wrong JSON structure with no compile-time error. Fix: a mandatory unit test on `to_json_schema` output for every schema.
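The second fix can be sketched as a structural check on the generated schema. This is an assumption-heavy illustration: the plain Hashes below stand in for whatever the schema's JSON output actually looks like, the "bad" shape is a guess at what a missing wrapper produces, and `object_wrapper_errors` is a hypothetical helper, not a RubyLLM API.

```ruby
# Hypothetical helper: checks a JSON-Schema-like Hash for the top-level
# object wrapper. The Hash shapes are assumptions, not real RubyLLM output.
def object_wrapper_errors(schema)
  errors = []
  errors << "top-level type must be \"object\"" unless schema["type"] == "object"
  errors << "properties must be a Hash" unless schema["properties"].is_a?(Hash)
  errors
end

# Stand-in for a correctly wrapped schema.
good = {
  "type" => "object",
  "properties" => { "title" => { "type" => "string" } }
}

# Stand-in for a schema whose fields were emitted without the wrapper.
bad = { "title" => { "type" => "string" } }

puts object_wrapper_errors(good).inspect
puts object_wrapper_errors(bad).inspect
```

Nothing in Ruby fails at load time when the structure is wrong, so a check like this in the test suite is the first place the mistake can be caught.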

What Didn’t Work

UX

Unlike backend code, where the AI arrived at quality solutions with the right rules and context, UI and UX were a different story. Same access to information, but the output quality didn’t follow.

A Claude Code skill called ui-ux-pro-max gave the AI a design intelligence framework for making better style and pattern decisions.

I also added simple_form and ViewComponent with DaisyUI helpers to narrow the decision space: card here, badge there, textarea instead of text input. Less guessing.

It all improved the output, but I still directed creative decisions throughout. Clearest Level 3 territory in the project.

Test Quality

The factory wrote a lot of tests, but early quality was uneven. System tests targeted isolated UI elements instead of complete user workflows. Edge cases were missing. Mocks and stubs were overused, obfuscating bugs in some cases.

I fixed this through interactive sessions where I diagnosed what was going wrong and tightened the testing rules over time. Later output was solid.

Review Skipping

The most expensive mistake in the project. The ship pipeline has a dedicated reviewer subagent, but the orchestrator would sometimes do its own review instead of spawning it. In one early session, it skipped the reviewer on 17 of 18 PRs. The sign-in button broke across three consecutive PRs because the reviewer never ran.

I tightened the rule language and added a prerequisite checkpoint: the orchestrator must verify that reviewer output exists before creating a PR. After tightening, reviews happened. But it’s all instruction-based. There are no hooks or system gates enforcing it. The AI reads the rule and chooses to comply.
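For contrast, here is a sketch of what a gate outside the model's discretion might check. It is not something the project had. The report path, the `require_review!` method, and the `ReviewMissing` error are hypothetical; the only idea taken from the text is "reviewer output must exist before a PR is created."

```ruby
require "tmpdir"

class ReviewMissing < StandardError; end

# Hypothetical gate: refuses to continue unless a non-empty reviewer report
# exists on disk. Run by a script or hook, not by the orchestrating agent.
def require_review!(report_path)
  unless File.file?(report_path) && !File.zero?(report_path)
    raise ReviewMissing, "no reviewer output at #{report_path}; refusing to open a PR"
  end
  true
end

# Usage: the gate blocks first, then passes once the report has been written.
Dir.mktmpdir do |dir|
  report = File.join(dir, "review.md") # hypothetical report location

  begin
    require_review!(report)
  rescue ReviewMissing => e
    puts "blocked: #{e.message}"
  end

  File.write(report, "No findings.\n")
  puts "gate passed" if require_review!(report)
end
```

The difference from the instruction-based checkpoint is who evaluates the condition: here the process stops whether or not the agent remembers the rule.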

That’s the limit. The AI supervises itself.

Where I Actually Landed

While the initial MVP fell short, it was a good foundation that needed additional attention. I spent a few days in QA and corrective cycles. Some ideas needed rethinking.

Here’s where each layer actually landed:

| Area | Level | What that meant |
|---|---|---|
| Models, controllers, services, AI integration | L5 | Spec in, merged PR out |
| Overall project (specs, rules, diagnostics) | L4 | I drafted specs, diagnosed gaps, tuned rules |
| UX and product strategy | L3 | Tight conversational iteration |

Level 4 worked because of iterative rule-hardening: ship, find a gap, write a rule, ship better. The factory improved because the rules got more precise, not because the AI got smarter.

The gap to Level 5: the factory mostly can’t find its own problems, and the AI supervises itself through the process. The rules are the most transferable artifact. They encode hard-won decisions and carry forward to the next project.

The Path to Level 5

Tools like Claude Code, Gemini, Codex and others can’t do Level 5 yet. The AI supervises itself: you give it a skill with 10 steps and trust it to run them all, including reviewing its own work. Subagents and hooks only get you so far. The AI is still the orchestrator.

Level 5 needs an external runtime controlling the process while the AI handles focused tasks in separate sessions. This is why we’re seeing things like StrongDM’s approach and open source projects like Fabro coming about.
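A toy sketch of that division of labor, under stated assumptions: each lambda stands in for a focused AI session, and `Step` and `run_pipeline` are hypothetical names. It does not describe how StrongDM or Fabro work. The point is only that ordinary code, not the model, owns the sequence and decides whether to continue.

```ruby
# Hypothetical external runtime: plain code controls the flow, and each
# step (a stand-in for a separate AI session) only does its own task.
Step = Struct.new(:name, :run)

# Runs steps in a fixed order. Each step receives the accumulated context
# and must return a Hash with :ok set. The runtime halts on the first failure.
def run_pipeline(steps, context = {})
  steps.each do |step|
    result = step.run.call(context)
    unless result.is_a?(Hash) && result[:ok]
      return { status: :halted, failed_step: step.name, context: context }
    end
    context[step.name] = result
  end
  { status: :shipped, context: context }
end

# Usage: the review step fails, so the PR step never runs.
steps = [
  Step.new(:write_tests, ->(_ctx) { { ok: true, output: "tests" } }),
  Step.new(:implement,   ->(ctx)  { { ok: ctx.key?(:write_tests), output: "code" } }),
  Step.new(:review,      ->(_ctx) { { ok: false, output: "sign-in button broken" } }),
  Step.new(:open_pr,     ->(_ctx) { { ok: true } })
]

outcome = run_pipeline(steps)
puts "#{outcome[:status]} at #{outcome[:failed_step]}"
```

A step cannot skip the reviewer here, because no step ever chooses what runs next.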

The external orchestrator handles the flow, and people with deep industry experience like Alex Bunardzic and Bryan Finster can now encode their methodologies into the AI’s configurations: TDD discipline, continuous delivery, fast feedback. If Alex wants a test mutation step in the pipeline, I believe he’d get it with this approach. I’m looking forward to trying it.

I knew what Level 5 was about through reading. Doing it showed me where the ceiling actually is: it is set by who supervises the process, not by how good the AI is at following instructions.