2026-02-24

How I Came to Understand the 100x Claim

First people bragged about 3-5x productivity gains from AI, then 10x, and now 100x. Usually without much clarity beyond references to parallelism and orchestration. I’d read these claims and think, OK, but what does that actually look like? What are you doing differently that gets you there?

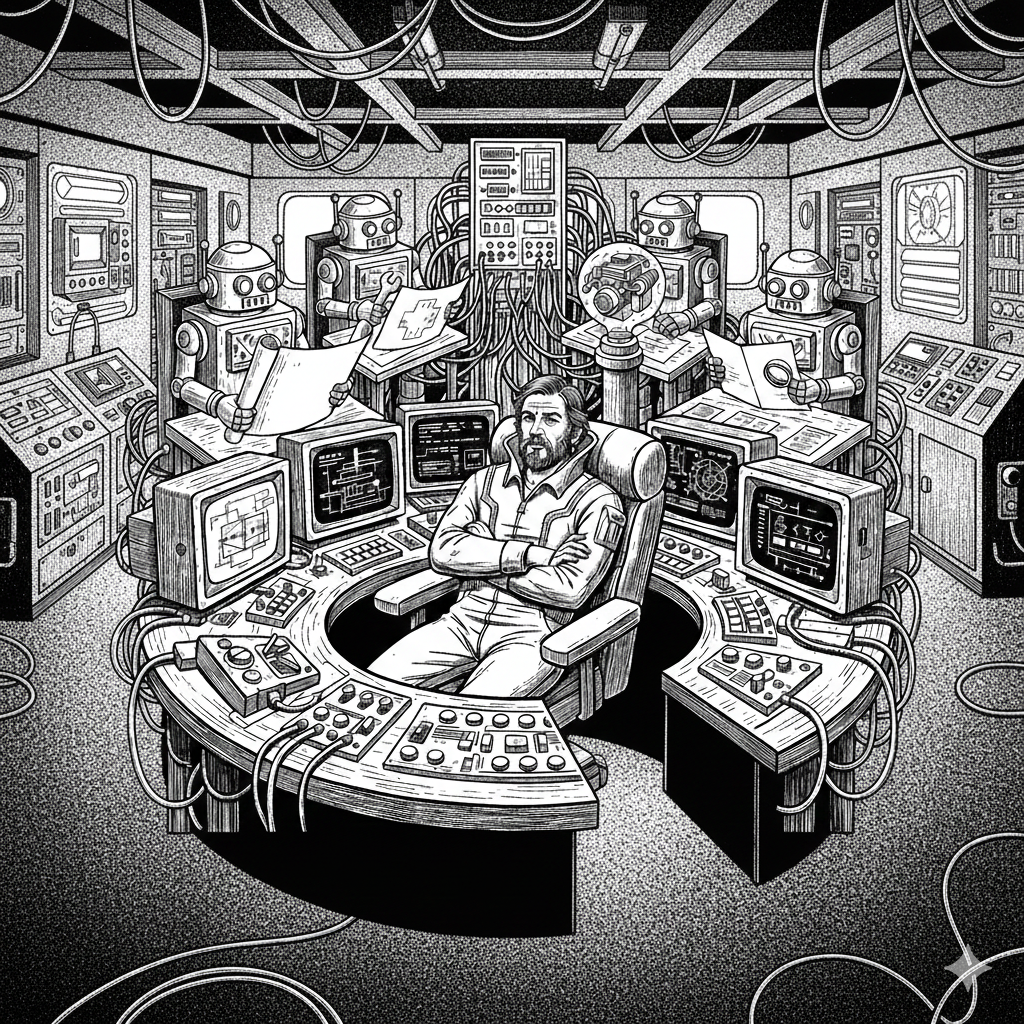

I was skeptical for a while. Then I lived through it, and I started to understand what these people are probably trying to describe. They’re mostly bad at explaining it, and I think that’s because the gains don’t come from any single technique. They come from building up an infrastructure over time that eventually changes what one person can operate.

This is how I got there.

Getting Incrementally Better

I didn’t start out great at employing AI for real work. The early months were a lot of trial and error, figuring out what Claude Code could actually handle versus what I still needed to do myself.

I got incrementally better. I wrote CLAUDE.md files with domain knowledge and coding standards, built custom skills for repeatable workflows, and learned how to spec work so agents could pick it up without a bunch of back-and-forth. I started building composable rule sets so conventions stayed consistent across projects.

As confidence grew, I got more ambitious. I joined other open-source projects. I added ancillary applications to the business. Each improvement compounded on the last: more context documented meant better agent output, which meant more confidence to delegate, which meant I could take on more scope.

Hitting the Bottleneck

The gains plateaued once I was running multiple projects at the same time. Boswell had two active codebases and production customers. Harmonic needed attention. I was maintaining a fork of the litestream-ruby gem. And I had this blog.

Switching tabs, switching console sessions, switching mental contexts. AI was helping inside each project, but I was still the bottleneck between them. Not only could I not take on much more, it was mentally taxing to juggle that many different codebases and topics at once. The gains from faster code generation had a ceiling because I could only context-switch so fast.

Exploring Orchestration Across Systems

I realized the bottleneck wasn’t the agents or the code. It was me directing everything manually. I needed to automate the delegation itself.

I built boswell-backoffice: a Fly.io VPS that runs the same Claude Code agents I use locally, triggered by GitHub events instead of me typing in a terminal. n8n listens for GitHub webhook events like issues being labeled or comments being posted. Dagu orchestrates the pipeline: post a start comment, set up the workspace, run Claude, commit the results, and post a completion comment.
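The five pipeline stages can be sketched as an ordered list of steps that stops at the first failure. This is only an illustration in Python, not the actual Dagu definition: the step names mirror the stages described above, while `run_pipeline`, `noop`, and the log format are hypothetical names invented for the example.

```python
from typing import Callable, List, Tuple

# A step is a (name, callable) pair. The names mirror the stages in the post.
Step = Tuple[str, Callable[[], None]]


def run_pipeline(steps: List[Step], log: List[str]) -> bool:
    """Run steps in order, recording progress; stop at the first failure."""
    for name, step in steps:
        log.append(f"start:{name}")
        try:
            step()
        except Exception as exc:
            # Later steps are skipped so a failed agent run is never committed.
            log.append(f"failed:{name}:{exc}")
            return False
        log.append(f"done:{name}")
    return True


def noop() -> None:
    """Placeholder; a real step would call GitHub, git, or the agent CLI."""


# Hypothetical wiring: every stage is a stub here.
PIPELINE: List[Step] = [
    ("post_start_comment", noop),
    ("setup_workspace", noop),
    ("run_claude", noop),
    ("commit_results", noop),
    ("post_completion_comment", noop),
]

if __name__ == "__main__":
    log: List[str] = []
    succeeded = run_pipeline(PIPELINE, log)
    print(succeeded, log)
```

Keeping each stage as a separate named step is what makes a failure easy to locate from the run log, whichever orchestrator ends up running it.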

Agents commit as “Boswell Agent” or “Claude Agent,” and it’s all visible in the git history. I started with a different orchestration tool (AI Maestro), replaced it with Dagu, and I’m still prototyping. The specific tools matter less than the pattern: I moved from directing agents manually to delegating through project management tools. GitHub Issues became work orders. GitHub Projects became the prioritization layer.

The issue workflow has two labels. agent-spec takes a casually written issue and explodes it into a full implementation specification. The agent reads the rough description, pulls in all the project context it has access to (CLAUDE.md files, conventions, domain knowledge), and rewrites the issue body with a detailed spec. My original draft gets preserved in a collapsed section at the bottom. Then agent-implement picks up that spec and does the actual work: write the code, run tests, commit, push, and open a PR. I can also drop an @agent comment on any issue or PR to give ad-hoc instructions.
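A rough sketch of how those triggers might be told apart, assuming the standard GitHub webhook payloads for the `issues` and `issue_comment` events. In my setup n8n does this part; the Python below is only an illustration, and `route_event` and the returned action names are made up for the example.

```python
from typing import Optional

SPEC_LABEL = "agent-spec"
IMPLEMENT_LABEL = "agent-implement"
MENTION = "@agent"


def route_event(event_type: str, payload: dict) -> Optional[str]:
    """Map a GitHub webhook event to a hypothetical agent action name.

    event_type is the value of the X-GitHub-Event header.
    Returns None when the event should be ignored.
    """
    action = payload.get("action")

    if event_type == "issues" and action == "labeled":
        label = payload.get("label", {}).get("name")
        if label == SPEC_LABEL:
            return "write_spec"
        if label == IMPLEMENT_LABEL:
            return "implement"

    # GitHub sends issue_comment for comments on both issues and PRs.
    elif event_type == "issue_comment" and action == "created":
        body = payload.get("comment", {}).get("body", "")
        if MENTION in body:
            return "ad_hoc"

    return None


if __name__ == "__main__":
    labeled = {"action": "labeled", "label": {"name": "agent-spec"}}
    print(route_event("issues", labeled))
```

Anything that doesn't match one of the three triggers returns `None`, so unrelated labels and ordinary comments never start an agent run.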

This means I can write a few sentences about what I want while I’m thinking about it, label it agent-spec, and come back later to a full spec I can review and refine before kicking off implementation. The agent does the tedious part of translating intent into detailed requirements, using the same project knowledge I’ve been building up all along.
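One way to keep the original draft around is a collapsed `<details>` block, which GitHub renders in issue bodies. The sketch below assumes that approach; the function name and the summary text are hypothetical, not what the agent actually emits.

```python
def build_issue_body(spec: str, original_draft: str) -> str:
    """Return the generated spec followed by the original draft, collapsed."""
    return (
        f"{spec.strip()}\n\n"
        "<details>\n"
        "<summary>Original draft</summary>\n\n"
        f"{original_draft.strip()}\n\n"
        "</details>\n"
    )


if __name__ == "__main__":
    print(build_issue_body("## Spec\n\nDetailed requirements.", "rough idea"))
```

The blank lines around the draft matter: GitHub only renders markdown inside `<details>` when it is separated from the surrounding HTML tags.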

What agents have shipped through this pipeline

Some examples:

- Issue #252: An agent investigated a SQLite transaction rollback bug across 7 commits, documented the full investigation, wrote regression tests, and opened PR #253 (240 additions, 13 deletions).

- Issue #283: An agent implemented console subscription management for tenants, including a new controller, dialog UI, and tests, and then addressed PR review feedback.

- Just about anything I feel like slinging through this instead of kicking off locally at the console.

The Litestream Incident

This is where it clicked for me.

Boswell’s production data volume hit 100% full. Litestream replication staging directories and WAL files were consuming about 906MB of a 974MB volume. The actual databases were around 2MB. I’d been seeing recurring SQLite errors across multiple issues, and the root cause turned out to be Litestream v0.3.13, which had known unbounded disk growth bugs that were fixed in v0.5.x. The upstream litestream-ruby gem was still bundling the old version, and the maintainer was unresponsive and not taking pull requests.

All of this happened on the same day (2026-02-22):

- I wrote issue #276 with the full spec for what needed to happen.

- One agent forked litestream-ruby, upgraded the binary from v0.3.13 to v0.5.8, added a litestream:download rake task, updated CI and docs, and bumped the version. It used proper PR workflow on the fork.

- Another agent picked up the fork in boswell-app, updated the Gemfile, converted the config format, updated the runtime config service, fixed the Dockerfile through 4 iterative attempts, and got the full test suite passing.

- I reviewed the work, merged, and deployed. Production issue resolved.

Two different codebases, two different problem domains (gem internals vs. Rails app deployment). Without the orchestration, I’d be manually reading gem source code, forking, upgrading, then context-switching to boswell-app to update config and debug Docker builds. It starts to hurt my head after a while.

With delegation, I wrote the spec, kicked it off, and reviewed the output. The agents handled the cross-codebase coordination and the iterative debugging themselves.

Agents Reviewing Each Other

I run two agents in a write/review loop. Both have access to the same conventions and standards through CLAUDE.md files, rules, and skills. They iterate until the work meets the standards. The responder has an out if the reviewer makes unreasonable calls, acting as a circuit breaker so they don’t loop forever.
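The shape of that loop, as a minimal sketch. The two agents are stand-in callables here, and both the `max_rounds` cap and the convention that the responder returns `None` to decline are assumptions made for the example, not a description of the real implementation.

```python
from typing import Callable, List, Optional, Tuple

Reviewer = Callable[[str], List[str]]                  # draft -> feedback items
Responder = Callable[[str, List[str]], Optional[str]]  # None means "declined"


def review_loop(
    draft: str,
    review: Reviewer,
    respond: Responder,
    max_rounds: int = 3,
) -> Tuple[str, str]:
    """Iterate write/review until approval, a decline, or the round cap."""
    for round_number in range(1, max_rounds + 1):
        feedback = review(draft)
        if not feedback:
            return draft, f"approved after {round_number} review(s)"

        revised = respond(draft, feedback)
        if revised is None:
            # Circuit breaker: the responder judged the feedback unreasonable.
            return draft, "responder declined feedback"
        draft = revised

    # Second safety net so the two agents cannot loop forever.
    return draft, "round limit reached"


if __name__ == "__main__":
    def review(draft: str) -> List[str]:
        return [] if "tests" in draft else ["add tests"]

    def respond(draft: str, feedback: List[str]) -> Optional[str]:
        return draft + " + tests"

    print(review_loop("fix", review, respond))
```

Either exit path hands back the latest draft along with the reason the loop stopped, so whoever reviews the result can see whether it was approved or cut short.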

The first result from a spec is generally good, but there’s usually some tech debt you don’t want to let accrue into something bigger. The review loop dials it in before I ever see it.

I’ve heard some claim this kind of song-and-dance routine is defensive and an indication of how weak the technology is. My opinion: so what, it works, it’s fast, and I’ve seen people on actual teams cause worse problems.

PR #253 shows the full loop. The investigation was documented, the fix was implemented, regression tests were written, and review feedback was addressed, all before I looked at it.

Trust and Staying in the Loop

Trust didn’t come automatically. I had to build it up over time by watching agents work and verifying results. Early on I reviewed everything closely, which was the right call. As the conventions matured and the review loops proved reliable, I gradually shifted to reviewing final output instead of watching every intermediate step.

I’m still the final arbiter on every merge. At my scale, that’s not a bother, so I’m not letting go of that yet. Besides, the review process surfaces opportunities to update agent knowledge. Sometimes I spot a convention gap or a better pattern that should be documented. That feedback loop improves future output, and agents also pick up on improvements implicitly as the codebase evolves.

I suspect a lot of people are in the early trust-building stage. The systems that make delegation work aren’t obvious until you’ve built them and lived with them for a while.

Coming to Understand the Claim

I don’t think it’s literally 100x. Not to me, anyway. But compared to a decade ago, even a year ago, what I’m able to do as one person is kind of astounding.

Some of the gains have nothing to do with typing speed. They show up in being able to:

- Delegate across codebases I’d otherwise have to context-switch between manually

- Run business operations through the same project management tools as code work

- Let agents handle the iterative debugging and review cycles

- Take on more projects and more scope without burning out

None of that shows up if you measure “time to write a function.” It shows up when you look at what one person can operate across a month.

What I Can Refine From Here

The orchestration layer is still a prototype, and I can see building a solid application around it. Queue concurrency is limited to 1 because of VPS constraints, but that’s fine at my scale. A ton more could get done with vertical upgrades alone.

There’s room to improve: smarter issue routing, more robust review loops.

The infrastructure for conventions and knowledge keeps paying dividends as it matures. Each project I add gets easier because the patterns are already documented and the agents already know how to follow them.

Where This Leaves Me

I came into this skeptical of the 100x claim. After building the infrastructure and living with it, I get what people are trying to say. They’re just not always articulating the details, and most don’t seem to mention the supporting systems you need to build before any of it works.

The gains are real, they’re compounding, and they’re operational. They’re not just about writing code faster. I’m one person running a multi-project business with production customers, and the path forward keeps getting clearer. Mature the orchestration from a strung-together prototype into a solid app. Then move on to growth wheels, marketing automation, or really anything else I feel like doing.